Vision Algorithms

We have a large toolbox of state-of-the-art image processing algorithms, which we select and combine as required for the individual inspection task. Each inspection setup, consisting of hardware and software, is tailored to the process and the individual inspection task for the best possible outcome. A detailed investigation of the defect catalogue and pre-engineering in our vision lab define, at an early stage of the project, the algorithms to be used and the detection rates to be expected.

Defect classification

Our approach is not to teach defects, but to classify defects from their appearance.

We pursue a change of mindset in inspection philosophy. It is based on the fact that a particular defect is observed many times, depending on the view, whereas a good product, which lacks any such particular feature, is only observed once. This means the variation of good products determines the inspection capabilities, while the singular appearance of a defect does not compromise the classification.

This leads to a very low false reject rate: the system is trained to recognize the normal variation of a good product and classifies it as good. Everything that does not comply with this normal variation is classified as a defect and rejected.
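The principle of learning only the normal variation of good products can be illustrated with a minimal Python sketch. It is an illustration under stated assumptions, not the production implementation: the two image features, the sample values, and the `max_sigma` tolerance are hypothetical, and a simple per-feature mean and standard deviation stands in for whatever model of "normal variation" a real system uses.

```python
from statistics import mean, stdev

def fit_good_model(good_features):
    """Learn per-feature mean and spread from good products only."""
    columns = list(zip(*good_features))
    return [(mean(column), stdev(column)) for column in columns]

def classify(features, model, max_sigma=4.0):
    """Return 'good' if every feature lies within max_sigma standard
    deviations of the good-product mean, otherwise 'reject'."""
    for value, (mu, sigma) in zip(features, model):
        if abs(value - mu) > max_sigma * max(sigma, 1e-9):
            return "reject"
    return "good"

# Hypothetical features per image: (mean brightness, edge density).
good_samples = [
    (100.0, 0.10),
    (102.0, 0.12),
    (98.0, 0.11),
    (101.0, 0.09),
    (99.0, 0.10),
]
model = fit_good_model(good_samples)

typical_product = classify((100.5, 0.105), model)   # inside the normal variation
unseen_defect = classify((100.0, 0.50), model)      # a defect type never shown to the system
```

Note that no defect image is needed to build `model`. A defect type that was never shown to the system is still rejected, because it falls outside the variation learned from good products.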

During the second stage of the evaluation, after the good/reject decision, the defects can be sub-categorized by several individual parameters that can be deduced from the visual appearance of the product and the related defect category, for example particle in solution versus particle in glass, or scratch depth. Individual inspection stations evaluate their images independently at high speeds of up to 600/min. The defect properties are then communicated between stations to obtain a third classification level, which evaluates differences from picture to picture of the same product.
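To make the idea of picture-to-picture evaluation concrete, here is a small hedged Python sketch. The decision rule is an assumption made for illustration only: a defect whose position changes between pictures is taken to be a particle in solution, while one that stays in place is taken to be in the glass. The function name, the pixel threshold, and the assumption that positions are given in a container-fixed coordinate frame are all hypothetical.

```python
from math import hypot

def sub_categorize(positions, move_threshold_px=3.0):
    """Sub-categorize one defect seen in consecutive pictures of a product.

    positions: list of (x, y) defect centroids, one per picture, assumed to
    be expressed in a coordinate frame fixed to the container.
    """
    if len(positions) < 2:
        return "undetermined"  # a single picture carries no motion information
    # Distance travelled between each pair of consecutive pictures.
    steps = [
        hypot(x2 - x1, y2 - y1)
        for (x1, y1), (x2, y2) in zip(positions, positions[1:])
    ]
    if max(steps) > move_threshold_px:
        return "particle in solution"
    return "particle in glass"

static_defect = sub_categorize([(10.0, 10.0), (10.5, 10.2), (10.1, 9.9)])
moving_defect = sub_categorize([(10.0, 10.0), (18.0, 14.0)])
```

In this sketch each station only has to report a defect position; the comparison across pictures happens afterwards, which mirrors the separation between independent station evaluation and the later classification level.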

Particle inspection

Particle inspection requires different setups. Particles can swim, float, sink, or adhere to any inner surface of the test sample. Swimming and sunken particles call for different inspection setups and strategies than floating particles. There is no “one-size-fits-all” solution: the optical setup, the inspection strategy, and the algorithms must all be chosen to fit the individual task at hand. Many factors influence the result. The most significant are the viscosity of the filling, the interface physics between filling and container (wetting or not), surface tension, container conditions, and fill level. This range of influences is the reason for our approach of a thorough pre-engineering study.

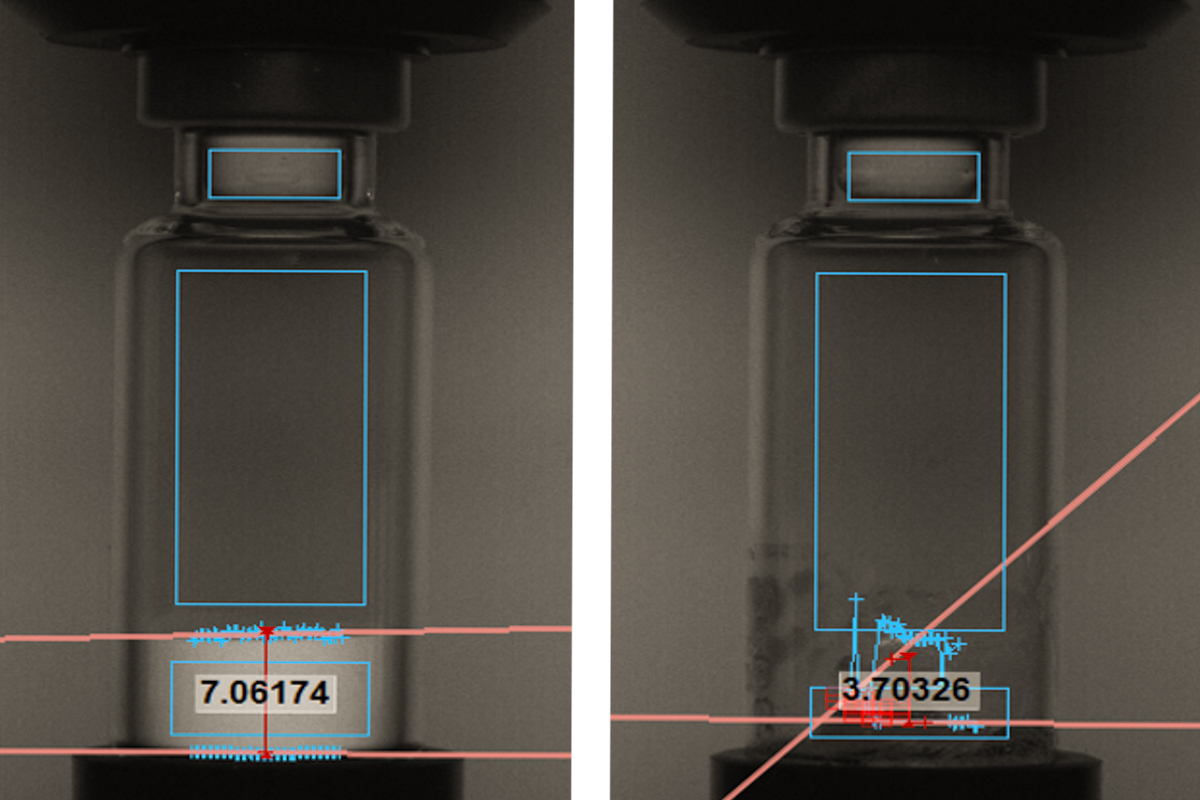

Floating particulates are inspected using image subtraction and particle tracking algorithms. High-resolution image sequences are recorded while the liquid inside the container is rotating, which sets potential particles in motion. The images are then subtracted from each other, so that only the differences between them remain. From these differences, particle sizes and movement directions can be determined. Spinning profiles are adjusted to different particle types and liquid characteristics during the recipe development phase.
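The subtraction and tracking steps can be sketched in a few lines of Python. This is a simplified illustration, not the algorithm used in production. It assumes grayscale frames stored as nested lists, a single particle that appears darker than the backlit liquid, and a hypothetical brightness `threshold`; the helper `make_frame` only generates synthetic test images.

```python
def darkened_pixels(prev, curr, threshold=30):
    """Pixels (x, y) that became darker by more than threshold between frames."""
    return [
        (x, y)
        for y, (row_prev, row_curr) in enumerate(zip(prev, curr))
        for x, (p, c) in enumerate(zip(row_prev, row_curr))
        if p - c > threshold
    ]

def centroid(pixels):
    """Mean (x, y) position of a non-empty list of pixels."""
    n = len(pixels)
    return (sum(x for x, _ in pixels) / n, sum(y for _, y in pixels) / n)

def track(frames, threshold=30):
    """Return (size in pixels, centroid) for each frame pair that shows a change."""
    observations = []
    for prev, curr in zip(frames, frames[1:]):
        pixels = darkened_pixels(prev, curr, threshold)
        if pixels:  # static content cancels out and leaves nothing behind
            observations.append((len(pixels), centroid(pixels)))
    return observations

def make_frame(px, py, width=8, height=6, background=200, particle=50):
    """Synthetic frame: bright background with one dark particle pixel."""
    frame = [[background] * width for _ in range(height)]
    frame[py][px] = particle
    return frame

frames = [make_frame(1, 2), make_frame(3, 2), make_frame(5, 3)]
observations = track(frames)

# Movement direction between the two tracked positions.
(_, (x0, y0)), (_, (x1, y1)) = observations
direction = (x1 - x0, y1 - y0)
```

Because only the sign-sensitive difference is kept, the position the particle has just left is ignored and each frame pair yields the particle's new position. Anything that does not move, such as a mark on the container, produces no difference at all.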

Deep Learning and Artificial Intelligence (AI)

In recent years, artificial intelligence has brought significant changes to image processing. We use deep learning algorithms to improve inspection results. Especially for tasks where conventional image processing faces challenges, AI can improve detection rates and drastically reduce false rejects. Deep Learning has shown significant improvements in specific fields, such as the differentiation between bubbles and particles, and particle inspection in highly viscous products, where spinning and image subtraction do not work in the usual way.

A prerequisite for supervised Deep Learning is a comprehensive, classified defect library, which is taught to the system and from which the algorithms learn and improve. To build such defect libraries over a longer period of time, we have developed a software tool that centrally stores images of real defects together with their classification. Please get in touch with us for further information.

Looking for more information about our AVI capabilities?

We are happy to advise you personally.